16/03/2019

In our daily lives, we constantly make judgments and decisions based on information, often involving probabilities. However, our intuition can lead us astray, particularly when dealing with statistical concepts. One cognitive bias that frequently trips us up is the base rate fallacy. This fallacy occurs when people ignore the general frequency or probability of an event (the base rate) and focus instead on specific, often vivid, pieces of information.

What is the Base Rate Fallacy?

The base rate fallacy is the error of judging how likely something is from case-specific evidence while neglecting how common it is in general. Essentially, it's about neglecting the prior probability of an event when evaluating new evidence. This often happens when the new evidence seems compelling or when the base rate is low, making it seem less relevant.

A classic example illustrating the base rate fallacy is the false positive paradox, which is closely related to the accuracy paradox. This paradox highlights a counter-intuitive situation where a test that appears highly accurate can still produce more false positives than true positives. This occurs when the prevalence of the condition being tested for is very low.

The False Positive Paradox Explained

Imagine a medical test designed to detect a rare disease. Let's say the test is 99% accurate, meaning it correctly identifies 99% of those who have the disease (true positives) and correctly identifies 99% of those who don't have the disease (true negatives). The remaining 1% are errors: false positives (identifying a healthy person as sick) and false negatives (identifying a sick person as healthy).

Now, consider applying this test to a population where the disease is very rare, say, only 1 in 1,000 people have it. If we test 10,000 people:

- True Positives: 10 people have the disease, and the test correctly identifies 99% of them. So, 10 * 0.99 = 9.9 (approximately 10) true positives.

- False Negatives: The test misses the remaining 1% of the 10 sick people. So, 10 * 0.01 = 0.1 false negatives; on average, fewer than one sick person goes undetected.

- True Negatives: 9,990 people do not have the disease. The test correctly identifies 99% of them. So, 9,990 * 0.99 = 9,890.1 (approximately 9,890) true negatives.

- False Positives: 9,990 people do not have the disease, but the test incorrectly identifies 1% of them as having it. So, 9,990 * 0.01 = 99.9 (approximately 100) false positives.

In this scenario, there are approximately 10 true positives and 100 false positives. This means that out of roughly 110 people who test positive, only about 10 are actually sick. The probability of actually having the disease given a positive test is about 10 / 110, which is roughly 9%. Despite the test being 99% accurate, a positive result is far more likely to be a false alarm than a true diagnosis. This is the essence of the false positive paradox, driven by the low base rate of the disease.
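The same bookkeeping can be sketched in a few lines of Python. This is a minimal illustration, not a clinical tool: the function name `confusion_counts` and its parameters are hypothetical, and it assumes the test's 99% accuracy applies equally to sick and healthy people, as in the scenario above. Carrying the unrounded counts through gives 9.9 expected true positives against 99.9 false positives.

```python
def confusion_counts(population, prevalence, sensitivity, specificity):
    """Expected counts when a screening test is applied to a whole population."""
    sick = population * prevalence
    healthy = population - sick
    return {
        "true_positives": sick * sensitivity,
        "false_negatives": sick * (1 - sensitivity),
        "true_negatives": healthy * specificity,
        "false_positives": healthy * (1 - specificity),
    }


# Scenario from the text: 10,000 people, 1 in 1,000 sick, test 99% accurate both ways.
counts = confusion_counts(10_000, 0.001, 0.99, 0.99)
positives = counts["true_positives"] + counts["false_positives"]
ppv = counts["true_positives"] / positives  # P(sick | positive test)

for name, value in counts.items():
    print(f"{name}: {value:.1f}")
print(f"P(sick | positive) = {ppv:.1%}")
```

Working with expected (fractional) counts rather than rounded head counts keeps the final probability free of rounding error.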

Examples Illustrating the Base Rate Fallacy

Example 1: Disease Testing

Let's delve deeper into the medical scenario:

High-Prevalence Population

Consider a population where 40% of people are infected with a disease. A test has a 5% false positive rate and a 0% false negative rate.

| | Infected | Uninfected | Total |
|---|---|---|---|
| Test Positive | 400 (True Positive) | 30 (False Positive) | 430 |
| Test Negative | 0 (False Negative) | 570 (True Negative) | 570 |
| Total | 400 | 600 | 1000 |

In this high-prevalence population (40% infected), a positive test result means there's a 400 / 430 ≈ 93% chance the person is truly infected. The high base rate makes the test result highly reliable.

Low-Prevalence Population

Now, consider the same test applied to a population where only 2% are infected.

| | Infected | Uninfected | Total |
|---|---|---|---|
| Test Positive | 20 (True Positive) | 49 (False Positive) | 69 |
| Test Negative | 0 (False Negative) | 931 (True Negative) | 931 |
| Total | 20 | 980 | 1000 |

Here, in the low-prevalence population (2% infected), a positive test result means only 20 / 69 ≈ 29% chance the person is truly infected. The numerous false positives from the large uninfected group overwhelm the few true positives from the small infected group. This stark difference illustrates how the base rate dramatically impacts the interpretation of test results.
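To see how smoothly the result degrades as the disease gets rarer, the two tables can be generalised into a small sweep over prevalence. The sketch below is illustrative only: `positive_predictive_value` is a hypothetical helper, and the 10% and 0.1% prevalences are extra points added for comparison rather than figures from the tables.

```python
def positive_predictive_value(prevalence, false_positive_rate, false_negative_rate=0.0):
    """P(infected | positive test) for a given prevalence and error rates."""
    true_pos = prevalence * (1 - false_negative_rate)   # infected and flagged
    false_pos = (1 - prevalence) * false_positive_rate  # uninfected but flagged
    return true_pos / (true_pos + false_pos)


# Same test throughout: 5% false positive rate, 0% false negative rate.
for prevalence in (0.40, 0.10, 0.02, 0.001):
    ppv = positive_predictive_value(prevalence, false_positive_rate=0.05)
    print(f"prevalence {prevalence:>6.1%} -> P(infected | positive) = {ppv:.1%}")
```

The test itself never changes in this loop; only the base rate does, which is exactly why the same positive result can mean very different things in different populations.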

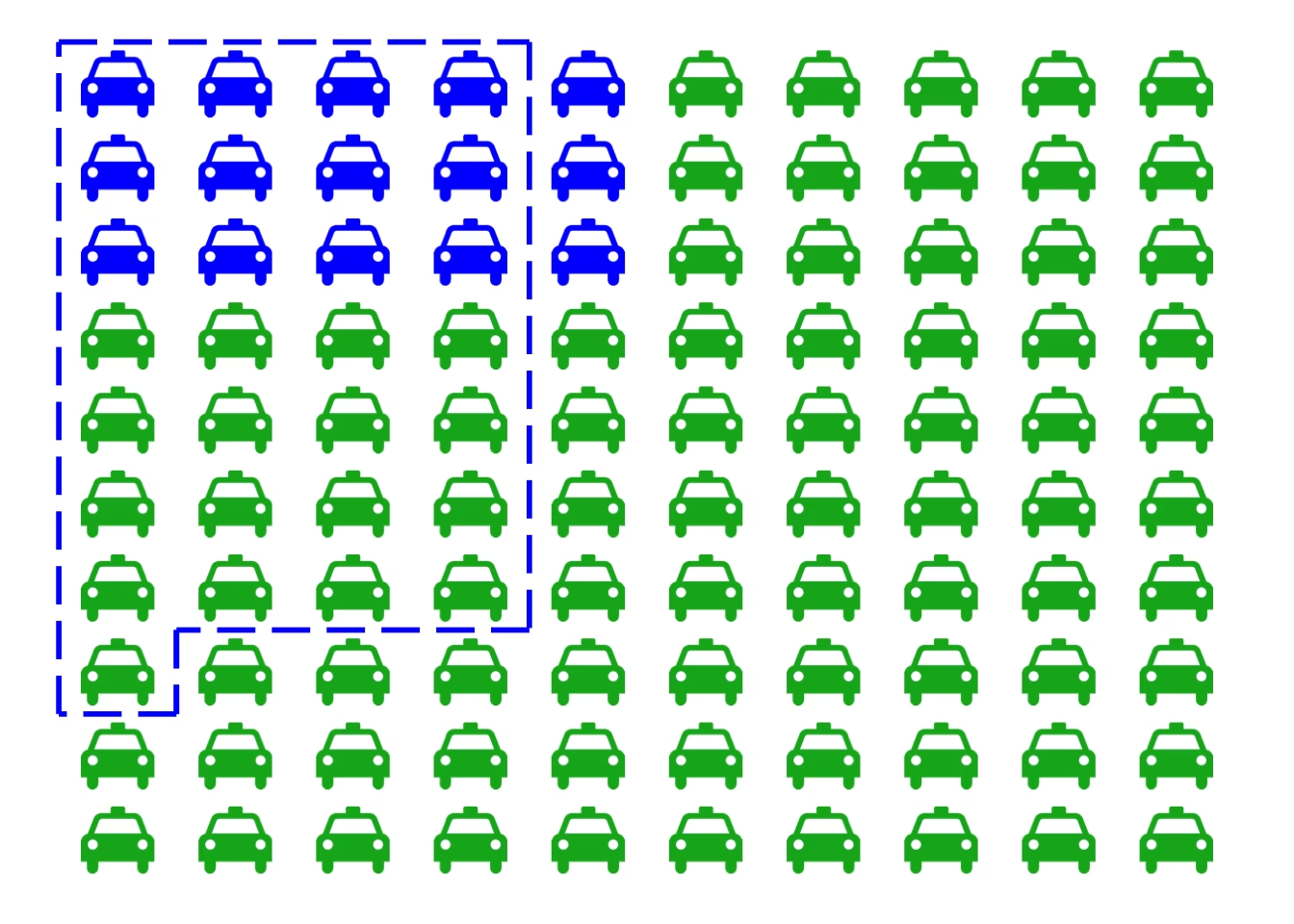

Example 2: Drunk Drivers

Imagine police use a breathalyser that has a 5% false positive rate for sober drivers but never fails to detect a truly drunk person. If 1 in 1,000 drivers is drunk, what is the probability a driver is drunk if the breathalyser indicates they are?

For every 1,000 drivers:

- 1 driver is drunk (100% detection rate = 1 true positive).

- 999 drivers are sober. With a 5% false positive rate, about 999 * 0.05 = 49.95 (approx. 50) sober drivers will falsely test positive.

So, out of approximately 51 positive test results (1 true + 50 false), only 1 is a true positive. The probability that a driver testing positive is actually drunk is 1 / 51, which is about 2%. Many might intuitively assume it's close to the 95% accuracy of the test, failing to account for the low base rate of drunk drivers.
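Readers who distrust the arithmetic can check it empirically. The following is a rough Monte Carlo sketch, assuming each driver is independently drunk with probability 1 in 1,000 and that the breathalyser behaves exactly as described; the function name, the sample size of 200,000 and the seed are arbitrary choices made for illustration.

```python
import random


def simulate_breathalyser(n_drivers, p_drunk, false_positive_rate, seed=0):
    """Simulate roadside tests; return the share of positive results that are drunk drivers."""
    rng = random.Random(seed)
    true_pos = false_pos = 0
    for _ in range(n_drivers):
        drunk = rng.random() < p_drunk
        if drunk:
            true_pos += 1   # the breathalyser never misses a drunk driver
        elif rng.random() < false_positive_rate:
            false_pos += 1  # sober driver wrongly flagged
    return true_pos / (true_pos + false_pos)


estimate = simulate_breathalyser(200_000, p_drunk=0.001, false_positive_rate=0.05)
print(f"Simulated P(drunk | positive) = {estimate:.3f}")
```

Because the result comes from random sampling, it will land near 2% rather than exactly on it; larger samples narrow the gap.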

Example 3: Terrorist Identification

Consider a city of 1 million people, with 100 terrorists and 999,900 non-terrorists. A facial recognition system has a 1% false positive rate and a 1% false negative rate (meaning it correctly identifies 99% of terrorists and non-terrorists).

If 1 million people pass by:

- Terrorists: 100 terrorists. With a 1% false negative rate, 1 terrorist is missed. With a 99% detection rate, 99 terrorists are flagged (true positives).

- Non-terrorists: 999,900 non-terrorists. With a 1% false positive rate, 999,900 * 0.01 = 9,999 non-terrorists are flagged (false positives).

If the alarm rings, it means a person has been flagged. The total number of people flagged is 99 (true positives) + 9,999 (false positives) = 10,098. The probability that a flagged person is actually a terrorist is 99 / 10,098, which is less than 1%! This demonstrates how a system with seemingly high accuracy can be overwhelmed by false positives when the target group is extremely rare.

Example 4: Biological Testing of a Suspect

Suppose a crime is committed, and the perpetrator has a blood type found in 10% of the population. A suspect is arrested and has this blood type. A prosecutor might argue that there's a 90% chance the suspect is guilty.

However, if the town has 1,000 people, then 100 people share that blood type. Only one of those 100 is the perpetrator. If the suspect was chosen randomly or based on weak evidence, the probability they are the perpetrator, based solely on the blood type match, is 1 in 100, or 1%. The prosecutor is confusing the probability of the evidence appearing in an innocent person (10%) with the probability of the person being innocent given the evidence (here about 99%, because roughly 100 people in the town share the blood type).
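The counting argument above fits in a short sketch. It assumes, as the example does, that the blood type is the only evidence and that every matching resident is equally likely to be the perpetrator beforehand; `prob_perpetrator_given_match` is a hypothetical name, and exact fractions are used only to avoid floating-point noise.

```python
from fractions import Fraction


def prob_perpetrator_given_match(population, match_rate, perpetrators=1):
    """P(perpetrator | blood type matches), with all matching residents equally likely a priori."""
    matching_people = population * match_rate  # includes the perpetrator
    return perpetrators / matching_people


p = prob_perpetrator_given_match(1_000, Fraction(1, 10))
print(p, float(p))  # 1/100 0.01
```

Doubling the town's population halves this probability, which shows that the answer depends on the size of the pool of possible suspects and not on the 10% figure alone.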

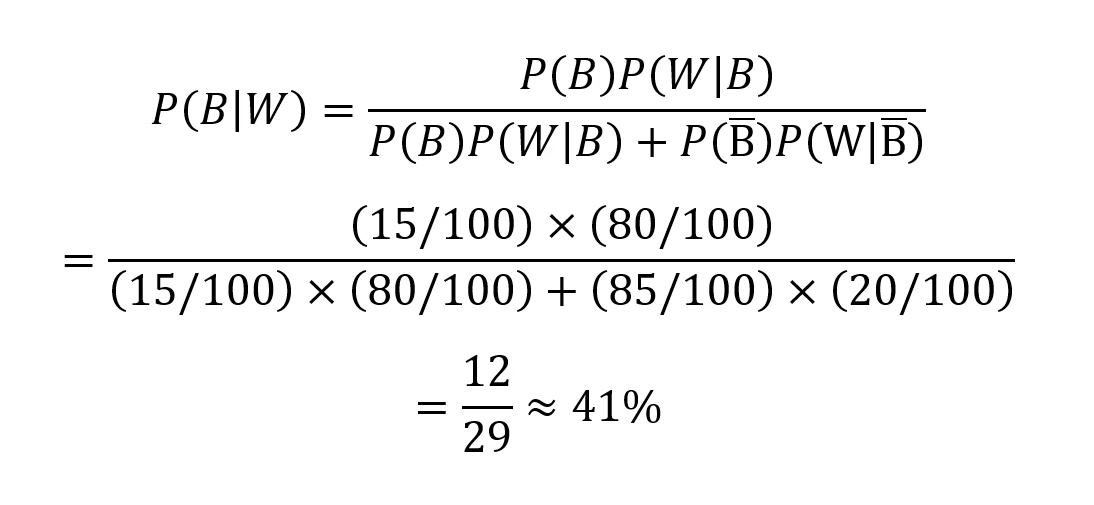

The Role of Bayes' Theorem

Bayes' theorem provides a mathematical framework for updating probabilities based on new evidence. It formally accounts for both the prior probability (base rate) and the likelihood of the evidence given different hypotheses. In the drunk driver example:

We want to find P(drunk | D), the probability a driver is drunk given a positive breathalyser test (D).

Bayes' Theorem: P(drunk | D) = [P(D | drunk) * P(drunk)] / P(D)

Where:

- P(drunk) = 0.001 (base rate of drunk drivers)

- P(sober) = 0.999

- P(D | drunk) = 1.00 (probability of positive test given drunk)

- P(D | sober) = 0.05 (probability of positive test given sober - false positive rate)

First, calculate P(D), the overall probability of a positive test:

P(D) = P(D | drunk) * P(drunk) + P(D | sober) * P(sober)

P(D) = (1.00 * 0.001) + (0.05 * 0.999) = 0.001 + 0.04995 = 0.05095

Now, apply Bayes' Theorem:

P(drunk | D) = (1.00 * 0.001) / 0.05095 ≈ 0.0196, or about 2%.

This calculation confirms the frequency-based reasoning from the drunk driver example: because drunk drivers are so rare, even a positive test leaves only about a 2% probability that the driver is actually drunk.
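The calculation is easy to wrap in a reusable function. This is a minimal sketch for a yes/no hypothesis and a single piece of evidence; the name `bayes_posterior` and its parameter names are illustrative and not taken from any library.

```python
def bayes_posterior(prior, likelihood, false_alarm_rate):
    """Return P(H | E) given P(H), P(E | H) and P(E | not H)."""
    # P(E) via the law of total probability
    evidence = likelihood * prior + false_alarm_rate * (1 - prior)
    return likelihood * prior / evidence


# Drunk driver example: P(drunk) = 0.001, P(D | drunk) = 1.00, P(D | sober) = 0.05
p_drunk_given_positive = bayes_posterior(prior=0.001, likelihood=1.00, false_alarm_rate=0.05)
print(f"P(drunk | D) = {p_drunk_given_positive:.4f}")  # 0.0196

# Terrorist example: 100 terrorists in 1,000,000 people, 99% detection, 1% false positives
p_terrorist_given_alarm = bayes_posterior(prior=100 / 1_000_000, likelihood=0.99, false_alarm_rate=0.01)
print(f"P(terrorist | alarm) = {p_terrorist_given_alarm:.4f}")
```

Keeping the prior as an explicit argument makes it hard to forget: the function cannot be called without stating a base rate.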

Why Does the Base Rate Fallacy Occur?

Several psychological factors contribute to the base rate fallacy:

- Representativeness Heuristic: We tend to rely on stereotypes or how well a specific case matches a prototype, ignoring statistical base rates. If someone fits a profile, we might overemphasize that similarity.

- Availability Heuristic: Vivid, easily recalled information (like a dramatic positive test result) can overshadow more abstract statistical data.

- Focus on Specific Information: When presented with specific details about a case, we might feel compelled to use that information directly, rather than stepping back to consider the broader statistical context.

- Misunderstanding of Probabilities: Many people have a poor grasp of statistical concepts like conditional probability and the impact of sample size or prevalence.

Implications and Avoiding the Fallacy

The base rate fallacy has significant implications in various fields, including medicine, law, and artificial intelligence:

- Medical Diagnosis: Overestimating the likelihood of a disease based on a positive test without considering the disease's prevalence can lead to unnecessary anxiety and further testing.

- Legal System: Evidence like DNA or fingerprint matches can be misinterpreted. A prosecutor might present a low probability of a random match as a high probability of guilt, ignoring the base rate of innocent individuals who might possess that evidence.

- AI and Machine Learning: Predictive algorithms, especially those dealing with rare events (like fraud detection or identifying potential threats), can be highly susceptible to the base rate fallacy if not carefully designed. A system might generate a vast number of false positives for rare events.

To avoid the base rate fallacy:

- Always consider the base rate: Before interpreting new information, ask yourself about the general frequency of the event or condition.

- Use Bayes' Theorem: Understand how to update probabilities with new evidence, formally incorporating base rates.

- Be skeptical of high accuracy claims for rare events: Recognize that when a condition is rare, even a highly accurate test can produce far more false positives than true positives.

- Seek out statistical information: Don't rely solely on anecdotal or descriptive information.

Understanding the base rate fallacy is crucial for making more rational and accurate judgments in a world saturated with data and probabilistic information. By consciously accounting for base rates, we can better navigate the complexities of probability and avoid common cognitive traps.
