08/02/2023

In the rapidly evolving landscape of Artificial Intelligence (AI), systems are constantly faced with the challenge of making sense of incomplete or uncertain information. From autonomous vehicles navigating unpredictable roads to medical diagnostic tools interpreting complex patient data, the ability to reason under uncertainty is paramount. This is precisely where Bayes' Theorem, often referred to as Bayes' Rule or Bayes' Law, emerges as a cornerstone of modern AI. It provides a robust mathematical framework for updating our beliefs or the probability of an event occurring, given new evidence or information. More than just a formula, Bayes' Theorem embodies a logical approach to learning from data, making it an indispensable tool for developing intelligent systems that can adapt and make informed decisions in a world full of unknowns.

This article will delve into the intricacies of Bayes' Theorem, exploring its fundamental principles, its mathematical derivation, and its profound relevance across various domains within AI. We will dissect its core components, walk through a practical example of its application in AI, and highlight the myriad ways it empowers intelligent systems to reason probabilistically and achieve remarkable feats.

- What Exactly is Bayes' Theorem?

- Unpacking the Core Components of Bayes' Theorem

- The Mathematical Foundation: Deriving Bayes' Rule

- Why is Bayes' Theorem Indispensable in AI?

- A Practical AI Application: Spam Email Filtering

- Diverse Applications of Bayes' Rule Across AI Domains

- Frequently Asked Questions (FAQs)

- Conclusion

What Exactly is Bayes' Theorem?

At its heart, Bayes' Theorem is a concept from probability theory that articulates the relationship between the conditional probability of two random events and their marginal probabilities. In simpler terms, it offers a systematic method to calculate the probability of an event (let's call it A) happening given that another event (B) has already occurred. This is written as P(A|B). Crucially, Bayes' Theorem allows us to determine P(A|B) by leveraging our knowledge of P(B|A) – the probability of event B occurring given that A has already happened.

The formula for Bayes' Theorem is:

P(A|B) = (P(B|A) * P(A)) / P(B)

Let's break down each element of this powerful equation:

- P(A): This represents the prior probability of event A occurring. It's our initial belief or knowledge about event A before we consider any new evidence.

- P(B): This is the marginal probability of event B occurring, often called the evidence. It's the overall probability of observing B, irrespective of whether event A is true.

- P(A|B): This is the posterior probability of event A given that event B has occurred. It's the updated probability of A after we have taken into account the new evidence B. This is often the value we are trying to find.

- P(B|A): This is the likelihood of observing event B given that event A has occurred. It quantifies how probable the evidence B is if event A is true.

The theorem essentially provides a mechanism for updating our initial probability (P(A)) in light of new evidence (P(B|A) and P(B)) to arrive at a revised, more informed probability (P(A|B)). It's a cornerstone for statistical inference and probabilistic reasoning.

Unpacking the Core Components of Bayes' Theorem

To fully grasp the utility of Bayes' Theorem, it's essential to understand the distinct roles played by its four major elements:

Prior Probability (P(A))

The prior probability, denoted as P(A), is our initial belief or the probability of an event A before any new evidence is taken into account. It reflects our existing knowledge, assumptions, or historical data concerning the event. For instance, if we're trying to determine if an email is spam, P(A) could be the general probability that any given email is spam based on past observations. It's the starting point of our probabilistic journey, representing what we know or believe about A based on previous information.

Likelihood (P(B|A))

Likelihood, represented as P(B|A), is the probability of observing the evidence B given that event A has occurred. This component is crucial because it tells us how well the hypothesis A explains the new evidence. For example, if A is "email is spam" and B is "the word 'free' appears in the email", then P(B|A) would be the probability of the word 'free' appearing in a spam email. A high likelihood means that the evidence B is very common when A is true; how strongly B actually supports A also depends on how common B is when A is false.

Evidence (P(B))

The evidence, P(B), is the overall probability of observing the evidence B, regardless of whether event A is true or not. It acts as a normalising factor in the Bayes' Theorem formula, ensuring that the posterior probabilities of all competing hypotheses (for example, A and not-A) sum to 1. Calculating P(B) usually means summing over every scenario in which B could occur, each weighted by its own probability; with two hypotheses this is P(B) = P(B|A) * P(A) + P(B|not A) * P(not A). It serves to contextualise the likelihood within the broader probability space.

Posterior Probability (P(A|B))

The posterior probability, P(A|B), is the revised belief or the updated probability of event A after considering the new evidence B. It answers the fundamental question: "What is the probability that A is true given that we have observed evidence B?" This is the outcome of the Bayes' Theorem calculation and represents our refined understanding of the event A, informed by the latest data. It's the culmination of integrating prior knowledge with new observations.

By combining these components, Bayes' Theorem allows AI systems to rationally update their beliefs and make more informed decisions as new evidence becomes available, making it an indispensable tool in machine learning and decision-making processes.
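The way the four components fit together can be sketched in a few lines of Python. This is a minimal illustration for the two-hypothesis case (A and not-A); the function names `evidence` and `posterior` and the numbers used are assumptions made for the example, not values from a real system.

```python
def evidence(prior_a, likelihood_b_given_a, likelihood_b_given_not_a):
    """P(B) via the law of total probability for two hypotheses, A and not-A."""
    return (likelihood_b_given_a * prior_a
            + likelihood_b_given_not_a * (1.0 - prior_a))


def posterior(prior_a, likelihood_b_given_a, likelihood_b_given_not_a):
    """P(A|B) = P(B|A) * P(A) / P(B)."""
    p_b = evidence(prior_a, likelihood_b_given_a, likelihood_b_given_not_a)
    if p_b == 0.0:
        raise ValueError("P(B) is zero, so P(A|B) is undefined")
    return likelihood_b_given_a * prior_a / p_b


# Hypothetical inputs: P(A) = 0.3, P(B|A) = 0.5, P(B|not A) = 0.1.
p_a_given_b = posterior(0.3, 0.5, 0.1)

# The same calculation from the point of view of not-A (roles swapped).
p_not_a_given_b = posterior(0.7, 0.1, 0.5)

# Because P(B) normalises both, the two posteriors sum to 1 (up to rounding).
print(p_a_given_b, p_not_a_given_b, p_a_given_b + p_not_a_given_b)
```

Dividing by the same P(B) in both calls is what makes the two posteriors a valid probability distribution over the hypotheses.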

The Mathematical Foundation: Deriving Bayes' Rule

The elegance of Bayes' Rule lies in its derivation from the fundamental definition of conditional probability. Understanding this derivation helps solidify one's grasp of the theorem's logic.

Let's begin with the definition of conditional probability:

The probability of event A occurring given that event B has already occurred is:

P(A | B) = P(A ∩ B) / P(B)

Here, P(A ∩ B) represents the probability of both events A and B happening together (their intersection).

Similarly, we can write the conditional probability of event B occurring given that event A has already occurred:

P(B | A) = P(A ∩ B) / P(A)

Now, we can rearrange this second equation to isolate P(A ∩ B):

P(A ∩ B) = P(B | A) * P(A)

Rearranging the first definition in the same way gives P(A ∩ B) = P(A | B) * P(B). Since both expressions are equal to P(A ∩ B), we can set them equal to each other:

P(A | B) * P(B) = P(B | A) * P(A)

Finally, to obtain the expression for P(A | B), we simply divide both sides of this equation by P(B), which requires P(B) > 0:

P(A | B) = (P(B | A) * P(A)) / P(B)

This derivation clearly shows how Bayes' Rule is a direct consequence of the definition of conditional probability, providing a logical and consistent way to reverse the conditioning – that is, to find P(A|B) when we know P(B|A).
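The derivation can also be checked numerically. The sketch below starts from a small joint distribution over A and B (the four probabilities are made up for illustration and sum to 1), computes both conditional probabilities from their definitions, and confirms that the two sides of Bayes' Rule agree.

```python
import math

# Hypothetical joint distribution P(A = a, B = b); the values sum to 1.
joint = {
    (True, True): 0.12,
    (True, False): 0.08,
    (False, True): 0.08,
    (False, False): 0.72,
}

# Marginals: sum the joint over the other variable.
p_a = sum(p for (a, _), p in joint.items() if a)
p_b = sum(p for (_, b), p in joint.items() if b)
p_a_and_b = joint[(True, True)]

# Conditional probabilities straight from the definition.
p_a_given_b = p_a_and_b / p_b
p_b_given_a = p_a_and_b / p_a

# Both products recover P(A and B), so Bayes' Rule follows.
print(math.isclose(p_a_given_b * p_b, p_b_given_a * p_a))
print(math.isclose(p_a_given_b, p_b_given_a * p_a / p_b))
```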

Why is Bayes' Theorem Indispensable in AI?

Bayes' Theorem is not merely an academic concept; it serves as a foundational pillar for numerous applications in Artificial Intelligence. Its ability to handle uncertainty and make decisions based on probabilities makes it profoundly relevant and often crucial for robust AI systems.

Probabilistic Reasoning

Many real-world AI scenarios involve inherent uncertainty. AI systems must often make decisions or draw inferences from noisy, incomplete, or ambiguous data. Bayes' Theorem provides a rigorous framework for probabilistic reasoning, allowing AI systems to update their beliefs about the state of the world as new evidence becomes available. This is vital for applications like autonomous vehicles, where sensors provide imperfect information about a constantly changing environment, requiring continuous belief updates to navigate safely.

Foundation for Machine Learning

Bayes' Theorem is the theoretical bedrock for a significant branch of Machine Learning, specifically Bayesian machine learning approaches. These methods enable AI models to incorporate prior knowledge or assumptions into their learning process and then update these beliefs as they are exposed to more data. This is particularly advantageous in situations with limited training data, or when dealing with complex, interdependent relationships between variables, leading to models that are robust and can quantify their uncertainty.

Classification and Prediction

In various classification tasks, such as identifying spam emails, diagnosing medical conditions, or categorising documents, Bayes' Theorem is a powerful tool. It allows AI systems to calculate the probability that a given input (e.g., an email, a set of symptoms) belongs to a particular class based on observed features. This probabilistic output allows for more nuanced and informed decisions compared to simple binary classifications, often providing a confidence score for each prediction.

Anomaly Detection

Identifying unusual patterns or outliers in large datasets is a critical task in many AI applications, including fraud detection, network security, and industrial monitoring. Bayes' Theorem can be effectively employed in anomaly detection by modelling the normal behaviour of a system. By quantifying the probability of observed data under normal conditions, deviations from this norm can be identified as potential anomalies, signalling unusual events or threats.
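One way this idea might look in code is shown below. It is only a sketch under strong assumptions: normal sensor readings are modelled as Gaussian, anomalous readings as uniform over a known range, and anomalies are given a prior probability of 1%. The function names, the parameter values and the choice of distributions are all hypothetical.

```python
import math


def gaussian_pdf(x, mean, std):
    """Density of a normal distribution at x."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


def anomaly_posterior(x, prior_anomaly=0.01, normal_mean=20.0,
                      normal_std=0.5, low=0.0, high=40.0):
    """P(anomaly | reading x) using Bayes' Theorem.

    Assumed models: normal behaviour is Gaussian, anomalies are uniform
    on [low, high]. Readings outside that range are rejected.
    """
    if not low <= x <= high:
        raise ValueError("reading outside the modelled range")
    p_x_given_normal = gaussian_pdf(x, normal_mean, normal_std)
    p_x_given_anomaly = 1.0 / (high - low)
    numerator = p_x_given_anomaly * prior_anomaly
    evidence = numerator + p_x_given_normal * (1.0 - prior_anomaly)
    return numerator / evidence


print(anomaly_posterior(20.0))  # typical reading: posterior stays very small
print(anomaly_posterior(25.0))  # far from the norm: posterior close to 1
```

A reading would typically be flagged when the posterior exceeds a chosen threshold, which trades off false alarms against missed anomalies.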

Overall, Bayes' Theorem equips AI with a powerful framework for navigating the inherent uncertainties of the real world, transforming raw data into actionable insights and enabling intelligent decision-making across a vast array of applications.

A Practical AI Application: Spam Email Filtering

One of the classic and most illustrative examples of Bayes' Rule in action within AI is its application in spam email classification. This demonstrates precisely how the theorem is used to determine whether an incoming email is spam or not, based on the presence of certain keywords or features.

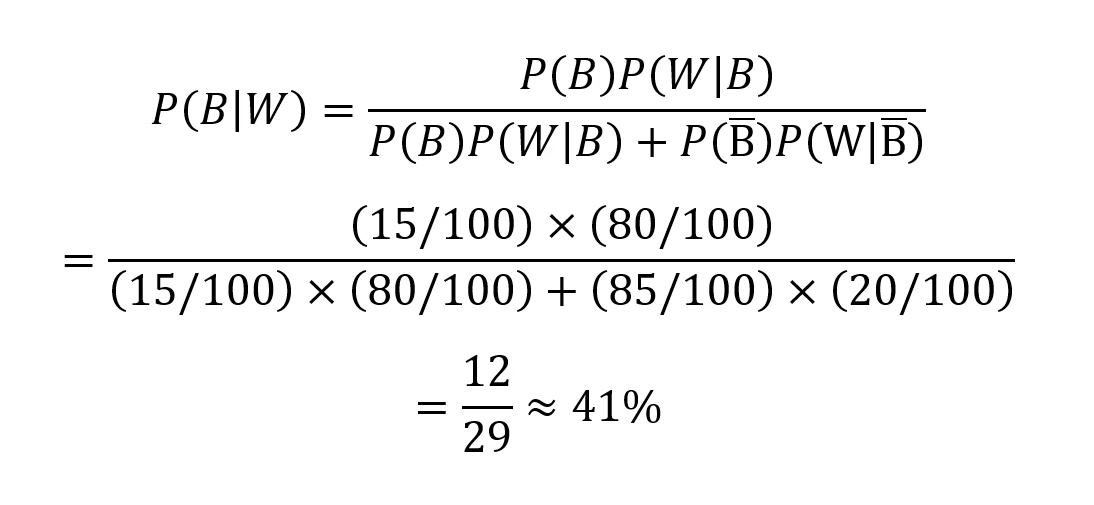

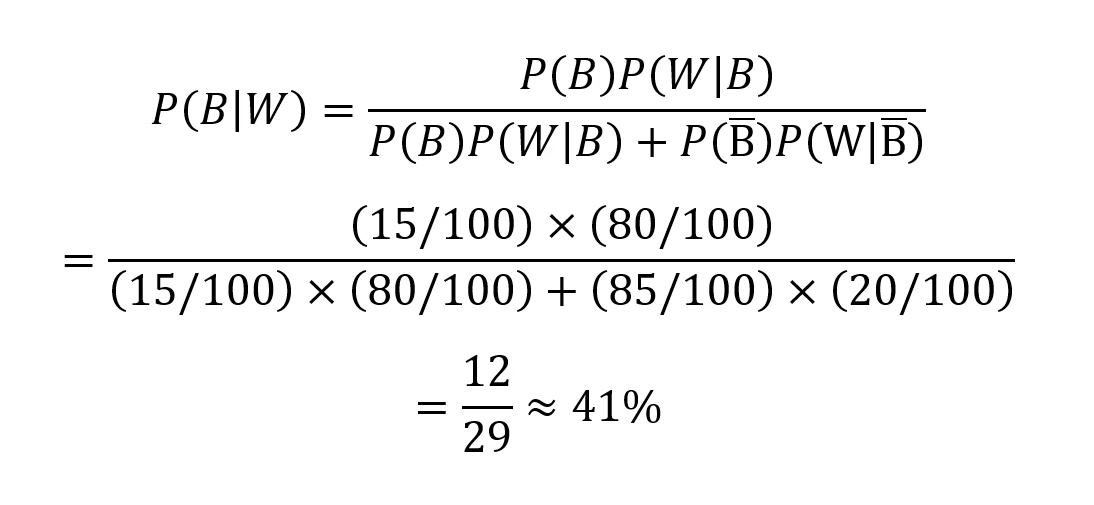

Consider a simple email filtering system designed to classify emails as spam (S) or non-spam (Ham, H) based on whether they contain the word "win" (W).

We are given the following probabilities, derived from historical data:

- P(S): The prior probability that any given email is spam. Let's say 20% of all emails are spam. So, P(S) = 0.2.

- P(H): The prior probability that any given email is not spam (ham). If 20% are spam, then 80% are ham. So, P(H) = 0.8.

- P(W|S): The probability that the word "win" appears in a spam email. Let's assume 60% of spam emails contain the word "win". So, P(W|S) = 0.6.

- P(W|H): The probability that the word "win" appears in a non-spam email. Let's assume only 10% of ham emails contain the word "win". So, P(W|H) = 0.1.

Our goal is to find P(S|W), which is the probability that an email is spam given that it contains the word "win". This is exactly what Bayes' Rule helps us calculate.

First, we need to calculate P(W), the overall probability that any email contains the word "win". We can use the law of total probability, which states that P(W) is the sum of the probabilities of "win" appearing in spam emails and "win" appearing in ham emails:

P(W) = P(W|S) * P(S) + P(W|H) * P(H)

Substituting our given values:

P(W) = (0.6 * 0.2) + (0.1 * 0.8)

P(W) = 0.12 + 0.08

P(W) = 0.20

So, 20% of all emails, on average, contain the word "win".

Now, we can apply Bayes' Rule to find P(S|W):

P(S|W) = (P(W|S) * P(S)) / P(W)

Substituting the values we have:

P(S|W) = (0.6 * 0.2) / 0.20

P(S|W) = 0.12 / 0.20

P(S|W) = 0.6

The result, P(S|W) = 0.6, means that if an email contains the word "win," there is a 60% chance that it is spam. This is a significant increase from our prior belief that only 20% of emails are spam. The presence of the word "win" has updated our belief about the email's spam status.
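The same calculation can be reproduced in a few lines of Python. The probabilities are the ones given above; the variable names are simply chosen for this illustration.

```python
# Probabilities from the worked example.
p_spam = 0.2
p_ham = 1.0 - p_spam
p_win_given_spam = 0.6
p_win_given_ham = 0.1

# Law of total probability: how often "win" appears in any email.
p_win = p_win_given_spam * p_spam + p_win_given_ham * p_ham

# Bayes' Rule: probability that an email containing "win" is spam.
p_spam_given_win = p_win_given_spam * p_spam / p_win

print(f"P(W) = {p_win:.2f}")               # P(W) = 0.20
print(f"P(S|W) = {p_spam_given_win:.2f}")  # P(S|W) = 0.60
```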

In a real-world AI system like an email spam filter, this calculation would be part of a much larger, more sophisticated model that considers hundreds or thousands of words and other features (e.g., sender, formatting, links). The filter uses these probabilities, often combined with other algorithms, to classify emails accurately and efficiently. By continuously updating these probabilities based on new incoming data and user feedback (e.g., marking emails as spam), the spam filter can adapt to evolving spam tactics and consistently improve its accuracy over time, demonstrating the dynamic power of Bayes' Rule in a practical AI application.

Diverse Applications of Bayes' Rule Across AI Domains

Beyond spam filtering, Bayes' Theorem underpins a vast array of AI applications, enabling systems to make sense of complex data and act intelligently in uncertain environments. Its versatility makes it a favourite tool for researchers and developers alike:

Bayesian Inference

In Bayesian statistics, Bayes' Rule is used to update the probability distribution over a set of parameters or hypotheses using observed data. This is particularly important for various machine learning tasks, such as parameter estimation in Bayesian networks, hidden Markov models, and other probabilistic graphical models. It allows models to quantify their uncertainty about parameters, providing richer insights than point estimates alone.
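A compact example of updating a distribution over a parameter is the Beta-Binomial model, sketched below. It assumes the unknown parameter is a success probability with a Beta prior, in which case Bayes' Rule reduces to adding observed counts to the prior's parameters. The helper names and the data (7 successes, 3 failures) are illustrative assumptions.

```python
def update_beta(alpha, beta, successes, failures):
    """Posterior Beta parameters after observing binomial data."""
    return alpha + successes, beta + failures


def beta_mean(alpha, beta):
    """Posterior mean of the success probability."""
    return alpha / (alpha + beta)


def beta_variance(alpha, beta):
    """Posterior variance: a simple measure of remaining uncertainty."""
    total = alpha + beta
    return alpha * beta / (total ** 2 * (total + 1))


# Start from a uniform prior, Beta(1, 1), then observe hypothetical data.
alpha, beta = 1.0, 1.0
alpha, beta = update_beta(alpha, beta, successes=7, failures=3)

print(alpha, beta)                 # posterior is Beta(8, 4)
print(beta_mean(alpha, beta))      # point summary of the posterior
print(beta_variance(alpha, beta))  # shrinks as more data arrives
```

Unlike a single point estimate, the posterior carries a variance, so the model can report how sure it is about the parameter.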

Naive Bayes Classification

Widely used in natural language processing (NLP) and text classification, the Naive Bayes classifier employs Bayes' Theorem to calculate the posterior probability that a document belongs to a specific category (e.g., sports, politics, positive sentiment) based on the words it contains. Despite its "naive" assumption that features are conditionally independent given the class (that is, once the category is known, each word appears independently of the others), it often performs surprisingly well in practice and is simple and efficient to train, making it a popular baseline model.
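A from-scratch sketch of a multinomial Naive Bayes text classifier is shown below. It uses add-one (Laplace) smoothing and log probabilities, both common choices rather than requirements; the function names and the four-document training set are invented for illustration.

```python
import math
from collections import Counter


def train_naive_bayes(documents):
    """documents: list of (list_of_words, label) pairs."""
    class_counts = Counter()
    word_counts = {}
    vocabulary = set()
    for words, label in documents:
        class_counts[label] += 1
        word_counts.setdefault(label, Counter()).update(words)
        vocabulary.update(words)
    return class_counts, word_counts, vocabulary


def predict(words, class_counts, word_counts, vocabulary):
    """Return the label with the highest (unnormalised) log posterior."""
    total_docs = sum(class_counts.values())
    scores = {}
    for label, count in class_counts.items():
        log_score = math.log(count / total_docs)  # log prior
        total_words = sum(word_counts[label].values())
        for word in words:
            if word not in vocabulary:
                continue  # ignore words never seen in training
            # Add-one smoothing avoids zero probabilities.
            smoothed = ((word_counts[label][word] + 1)
                        / (total_words + len(vocabulary)))
            log_score += math.log(smoothed)  # naive independence: add logs
        scores[label] = log_score
    return max(scores, key=scores.get)


# Tiny hypothetical training set.
training = [
    ("win a free prize now".split(), "spam"),
    ("free money win big".split(), "spam"),
    ("meeting agenda for monday".split(), "ham"),
    ("lunch on monday with the team".split(), "ham"),
]
model = train_naive_bayes(training)

print(predict("win free money".split(), *model))       # spam
print(predict("monday team meeting".split(), *model))  # ham
```

The evidence term P(B) is omitted because it is the same for every class and does not change which class scores highest.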

Bayesian Networks

Bayesian Networks are graphical models that represent the conditional dependencies among a set of variables as a directed acyclic graph, and use Bayes' Theorem to update beliefs about unobserved variables once others have been observed. They are well suited to reasoning under uncertainty and are used in a variety of AI applications, including medical diagnosis (linking symptoms to diseases), fault detection in complex systems, and building sophisticated decision support systems. With appropriate assumptions about the graph's structure, they can also support causal as well as correlational reasoning.

Reinforcement Learning

In reinforcement learning, where an agent learns to make decisions by interacting with an environment, Bayes' Rule can be used to model the environment probabilistically. Bayesian reinforcement learning methods allow agents to estimate and update their beliefs about state transitions and rewards. This enables them to make more informed and robust decisions, especially in environments where outcomes are uncertain or observations are noisy.
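As a rough sketch of how an agent might maintain beliefs about state transitions, the class below keeps a Dirichlet posterior over next states for each (state, action) pair. With a Dirichlet prior, the Bayesian update amounts to counting observed transitions. The class name `TransitionBelief`, its methods and the toy states are hypothetical, and reward modelling is left out for brevity.

```python
class TransitionBelief:
    """Dirichlet-count belief over P(next_state | state, action)."""

    def __init__(self, states, prior_count=1.0):
        self.states = list(states)
        self.prior_count = prior_count  # symmetric Dirichlet pseudo-count
        self.counts = {}                # (state, action, next_state) -> count

    def observe(self, state, action, next_state):
        """Bayesian update: add one to the matching transition count."""
        key = (state, action, next_state)
        self.counts[key] = self.counts.get(key, 0.0) + 1.0

    def posterior_mean(self, state, action):
        """Expected transition probabilities under the current posterior."""
        pseudo = {
            s: self.prior_count + self.counts.get((state, action, s), 0.0)
            for s in self.states
        }
        total = sum(pseudo.values())
        return {s: c / total for s, c in pseudo.items()}


belief = TransitionBelief(states=["A", "B"])
for next_state in ["B", "B", "A", "B"]:  # hypothetical experience
    belief.observe("A", "go", next_state)

print(belief.posterior_mean("A", "go"))    # leans towards "B"
print(belief.posterior_mean("B", "stay"))  # no data yet: uniform prior
```

An agent could plan against these posterior means, or sample transition models from the posterior to drive exploration.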

Bayesian Optimisation

For complex optimisation tasks, such as hyperparameter tuning in deep learning models, a probabilistic surrogate model (commonly a Gaussian process) is fitted to the objective function and updated via Bayes' Theorem after each evaluation. Bayesian optimisation techniques use this surrogate to decide where to evaluate next, balancing exploration of uncertain regions against exploitation of promising ones. When each evaluation is expensive, this typically finds good configurations in far fewer evaluations than brute-force or grid search.

Anomaly Detection

As mentioned earlier, Bayes' Theorem can be used to identify anomalies or outliers in datasets. By modelling how data is distributed under normal operating conditions, the probability of each new observation can be quantified, and observations that are highly improbable under that model can be flagged. This capability is critical for a wide range of applications, including detecting fraudulent transactions in finance, identifying unusual network activity to flag security threats, and monitoring machinery for signs of impending failure.

Personalisation and Recommendation Systems

In recommendation systems (e.g., for movies, products, or news articles), Bayes' Theorem can be used to continuously update user preferences. By observing a user's interactions (e.g., clicks, purchases, ratings), the system can refine its probabilistic understanding of their tastes. This allows for highly personalised recommendations that adapt over time, leading to more relevant content suggestions and an improved user experience.

Robotics and Sensor Fusion

In robotics, Bayes' Rule is fundamental for sensor fusion, the process of combining data from multiple sensors (e.g., cameras, lidar, ultrasonic sensors) to gain a more accurate and robust estimate of a robot's state or its environment. By integrating noisy and uncertain sensor readings probabilistically, Bayes' Theorem enables tasks like precise localisation (knowing where the robot is) and mapping (building a map of its surroundings), which are crucial for autonomous navigation.
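The simplest case of sensor fusion can be sketched as follows: two sensors measure the same quantity, each with independent Gaussian noise, and no prior information is assumed. Under those assumptions the Bayesian posterior is again Gaussian, with a precision-weighted mean. The function name and the sensor readings are hypothetical.

```python
def fuse_gaussian(mean_a, var_a, mean_b, var_b):
    """Fuse two independent Gaussian estimates of the same quantity.

    Precisions (1 / variance) add, and each mean is weighted by its
    precision, so the more reliable sensor has more influence.
    """
    fused_var = 1.0 / (1.0 / var_a + 1.0 / var_b)
    fused_mean = fused_var * (mean_a / var_a + mean_b / var_b)
    return fused_mean, fused_var


# Hypothetical distance readings in metres: a precise lidar and a
# noisier ultrasonic sensor.
lidar_mean, lidar_var = 10.2, 0.04
ultrasonic_mean, ultrasonic_var = 9.8, 0.16

fused_mean, fused_var = fuse_gaussian(
    lidar_mean, lidar_var, ultrasonic_mean, ultrasonic_var
)
print(fused_mean, fused_var)
```

The fused variance is smaller than either input variance, which is why combining sensors gives a more confident estimate than relying on the best single sensor. The measurement update in a Kalman filter applies the same idea at every time step.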

Medical Diagnosis

In healthcare, Bayes' Theorem is a powerful component of medical decision support systems. It helps update the likelihood of various diagnoses based on new patient symptoms, laboratory test results, and medical history. By providing a probabilistic framework, it assists clinicians in making more accurate and informed diagnostic decisions, especially when faced with ambiguous or overlapping symptoms.
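The sketch below illustrates how a diagnostic belief might be updated as test results arrive. The prevalence (1%), sensitivity (95%) and specificity (90%) are invented for the example, and the two tests are assumed to be conditionally independent given the patient's true condition, which real tests may not be.

```python
def update(prior, sensitivity, specificity, test_positive):
    """Return P(disease | test result) given the current belief."""
    if test_positive:
        like_disease = sensitivity            # P(+ | disease)
        like_healthy = 1.0 - specificity      # P(+ | no disease)
    else:
        like_disease = 1.0 - sensitivity      # P(- | disease)
        like_healthy = specificity            # P(- | no disease)
    evidence = like_disease * prior + like_healthy * (1.0 - prior)
    return like_disease * prior / evidence


# Hypothetical figures: 1% prevalence, 95% sensitivity, 90% specificity.
belief = 0.01
after_first = update(belief, 0.95, 0.90, test_positive=True)

# The first posterior becomes the prior for the second test.
after_second = update(after_first, 0.95, 0.90, test_positive=True)

print(f"{after_first:.3f}")   # 0.088
print(f"{after_second:.3f}")  # 0.477
```

Even with an accurate test, one positive result leaves the probability of disease below 10% here because the condition is rare, which shows why the prior matters as much as the test's accuracy.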

Frequently Asked Questions (FAQs)

What is the primary purpose of Bayes' Theorem in AI?

The primary purpose of Bayes' Theorem in AI is to enable systems to reason under uncertainty and update their beliefs or probabilities about events as new evidence becomes available. It provides a mathematical framework for learning from data and making informed decisions in complex, real-world scenarios where information may be incomplete or noisy.

How does Bayes' Theorem handle uncertainty in AI?

Bayes' Theorem handles uncertainty by allowing AI systems to quantify and update probabilities. Instead of providing a definitive 'yes' or 'no' answer, it calculates the probability of an event given new evidence, allowing the system to express its confidence. This probabilistic approach is crucial for robust AI, as it accounts for the inherent variability and unpredictability in data and environments.

Can Bayes' Theorem be used for predictive modelling?

Absolutely. Bayes' Theorem is extensively used in predictive modelling. For instance, in classification tasks, it predicts the probability that an input belongs to a certain class (e.g., spam or not spam). In other models, it can predict future outcomes by updating probabilities based on historical data and new observations, making it a powerful tool for forecasting and risk assessment.

What is the difference between prior and posterior probability in Bayes' Theorem?

The prior probability (P(A)) is your initial belief or the probability of an event before any new evidence is considered. It represents what you know beforehand. The posterior probability (P(A|B)), on the other hand, is the updated or revised belief about the event after new evidence (B) has been observed. It's the outcome of applying Bayes' Theorem, reflecting a more informed probability.

Is Bayes' Theorem only applicable to AI?

While this article focuses on its applications in AI, Bayes' Theorem is a fundamental concept in probability and statistics with far-reaching applications across many fields. It is used in medicine for diagnosis, in finance for risk assessment, in legal contexts for evidence evaluation, in weather forecasting, and even in everyday decision-making, demonstrating its universal utility for updating beliefs with new information.

Conclusion

Bayes' Theorem stands as a monumental concept in the realms of probability and statistics, finding indispensable application across Artificial Intelligence, machine learning, data science, and countless other disciplines. It furnishes a powerful and elegant means of updating our beliefs and probabilities in the face of new evidence, thereby serving as a critical constituent of effective probabilistic reasoning. Its capacity to model and manage uncertainty is fundamental to the development of intelligent AI systems, enabling them to make nuanced decisions, recognise intricate patterns, and build robust probabilistic models of the world.

Understanding and adeptly applying Bayes' Theorem is not merely an academic exercise; it is essential for anyone seeking to develop or comprehend cutting-edge AI technologies. It empowers us to transition from making mere guesses to making informed, data-driven decisions, ultimately fostering the creation of AI systems that can reason intelligently and adapt effectively, even when confronted with the inherent ambiguities of the real world. Its enduring relevance ensures that Bayes' Theorem will remain a cornerstone of AI research and development for the foreseeable future.
