06/02/2018

A dark, rainy evening in a bustling UK town. A sudden screech of tyres, a crash, and then silence, broken only by the distant wail of sirens. An accident involving a taxi has occurred. But which one? Two taxi companies operate in this town: the Green Taxi Co, with its distinctive green cabs, and the Blue Taxi Co, whose easily identifiable blue cabs make up 90% of all taxis on the road. A lone witness, shaken but seemingly clear-headed, steps forward, claiming to have seen a green cab flee the scene. How much weight should we place on this testimony? This seemingly simple question opens the door to a fascinating journey into the world of probability, where initial assumptions can be profoundly misleading, and where a powerful mathematical tool known as Bayes' Theorem helps us weigh new evidence against what we already know.

- The Scene of the Incident: Initial Probabilities

- Understanding the Witness: Accuracy and Error

- Before the Testimony: What Would the Witness Likely Say?

- The Crucial Question: Was it Really Green?

- The Power of Bayes' Theorem: Beyond Intuition

- Implications for Real-World Scenarios

- Frequently Asked Questions (FAQs)

  - What exactly is Bayes' Theorem?

  - Why is the probability that it was a Green cab so low (around 30.77%) even if the witness said Green and is 80% accurate?

  - Does this mean eyewitnesses are unreliable?

  - How do prior probabilities affect the outcome?

  - Could this scenario apply to something like a medical test result?

- Conclusion

The Scene of the Incident: Initial Probabilities

Before any witness speaks, we already possess crucial information about the taxi landscape in this town. This information forms our "prior probability" – what we know before any new evidence comes to light.

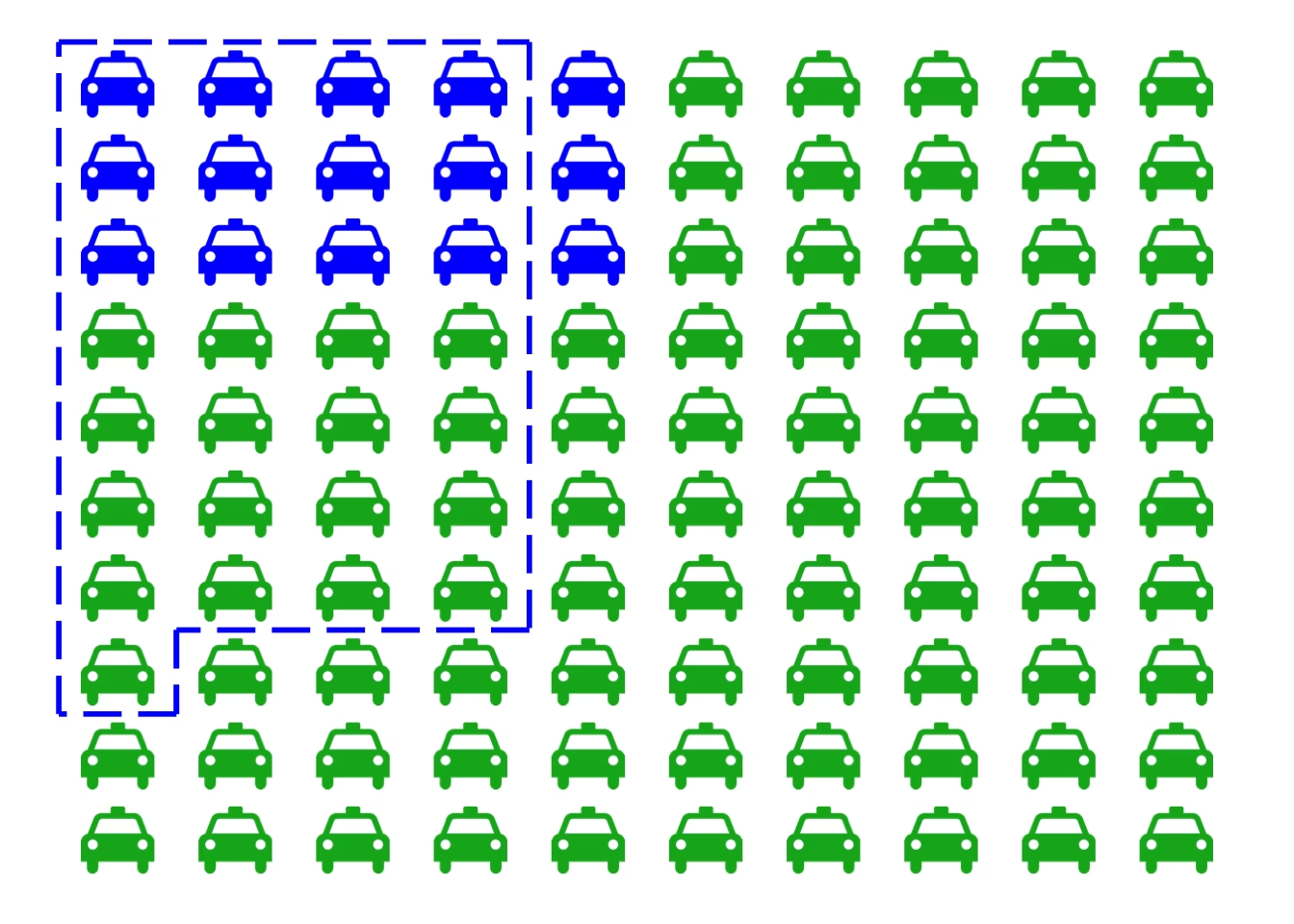

We know the following:

- Only 10% of the taxis in the town belong to the Green Taxi Co.

- A vast 90% of the taxis belong to the Blue Taxi Co.

In probabilistic terms, this means:

- The probability that a randomly chosen taxi is Green (P(Green)) = 0.10

- The probability that a randomly chosen taxi is Blue (P(Blue)) = 0.90

These figures are our starting point. If an accident occurs and we have no other information, it's far more likely to involve a Blue cab simply because there are so many more of them on the road (assuming every taxi is equally likely to be involved in an accident).

Understanding the Witness: Accuracy and Error

The human element is always a variable. The witness's testimony is vital, but how reliable is it, especially on a dark evening? To find out, the witness was tested under conditions similar to those of that fateful night. The results quantified the witness's observational accuracy:

- There was an 80% chance that the witness would correctly identify the colour of a cab.

- Conversely, there was a 20% chance that the witness would incorrectly identify the colour.

It's important to note that this accuracy rate is the same whatever colour the cab actually is. So, if the cab was green, there's an 80% chance the witness would say "green" and a 20% chance they'd say "blue". The same applies if the cab was blue.
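To make the witness model concrete, it can be written as a small table of conditional probabilities, P(report | actual colour), next to the priors from the previous section. This is a minimal Python sketch assuming the symmetric 80%/20% accuracy described above; the names `PRIOR`, `ACCURACY` and `LIKELIHOOD` are hypothetical, chosen only for illustration.

```python
# Prior: share of each company's cabs on the road.
PRIOR = {"Green": 0.10, "Blue": 0.90}

# Witness accuracy, assumed identical whatever the true colour.
ACCURACY = 0.80

# LIKELIHOOD[actual][said] = P(witness says `said` | cab was `actual`).
LIKELIHOOD = {
    actual: {said: ACCURACY if said == actual else 1 - ACCURACY for said in PRIOR}
    for actual in PRIOR
}

# Each row is a probability distribution over what the witness might say.
for actual, row in LIKELIHOOD.items():
    assert abs(sum(row.values()) - 1.0) < 1e-12
    print(actual, row)
```

Each row sums to 1 because, whatever colour the cab really was, the witness must say either Green or Blue.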

Before the Testimony: What Would the Witness Likely Say?

Let's pause for a moment and consider a hypothetical scenario: if an accident occurred, what is the overall probability that our witness, regardless of what actually happened, would claim it was a Blue cab? This question helps us understand the witness's propensity to report a certain colour, taking into account both the prevalence of the cabs and their own accuracy.

To calculate this, we need to consider two possibilities that lead to the witness claiming "Blue":

- The cab was actually Blue, AND the witness correctly identified it as Blue.

- The cab was actually Green, AND the witness incorrectly identified it as Blue.

Let's break down the probabilities:

- Probability of (Actual Blue AND Witness says Blue) = P(Witness says Blue | Actual Blue) * P(Actual Blue) = 0.80 * 0.90 = 0.72

- Probability of (Actual Green AND Witness says Blue) = P(Witness says Blue | Actual Green) * P(Actual Green) = 0.20 * 0.10 = 0.02

To find the total probability that the witness will claim it was a Blue cab, we add these two probabilities together:

P(Witness says Blue) = 0.72 + 0.02 = 0.74

So, even before hearing the specific testimony from this accident, there's a 74% chance that, if any accident occurred, the witness would have claimed it was a Blue cab. This is largely due to the overwhelming number of blue cabs on the road.

| Scenario | Probability Breakdown | Resulting Probability |
|---|---|---|
| Actual Blue, Witness says Blue | P(Correct ID) * P(Actual Blue) = 0.80 * 0.90 | 0.72 |
| Actual Green, Witness says Blue | P(Incorrect ID) * P(Actual Green) = 0.20 * 0.10 | 0.02 |
| Total Probability Witness Says Blue | 0.72 + 0.02 | 0.74 |
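The two-scenario sum above is an instance of the law of total probability. The short Python sketch below reproduces the 0.74 figure, assuming the same 10%/90% priors and 80% accuracy; the helper `p_says` is a hypothetical name used only here. It also gives the complementary probability that the witness says "Green".

```python
# Priors and witness accuracy from the scenario.
prior = {"Green": 0.10, "Blue": 0.90}
accuracy = 0.80  # chance the witness names the colour correctly


def p_says(colour: str) -> float:
    """Law of total probability: sum of P(says colour | actual) * P(actual)."""
    total = 0.0
    for actual, p_actual in prior.items():
        p_report = accuracy if actual == colour else 1 - accuracy
        total += p_report * p_actual
    return total


print(round(p_says("Blue"), 4))   # 0.74
print(round(p_says("Green"), 4))  # 0.26
```

The two results add up to 1, since the witness must report one colour or the other.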

The Crucial Question: Was it Really Green?

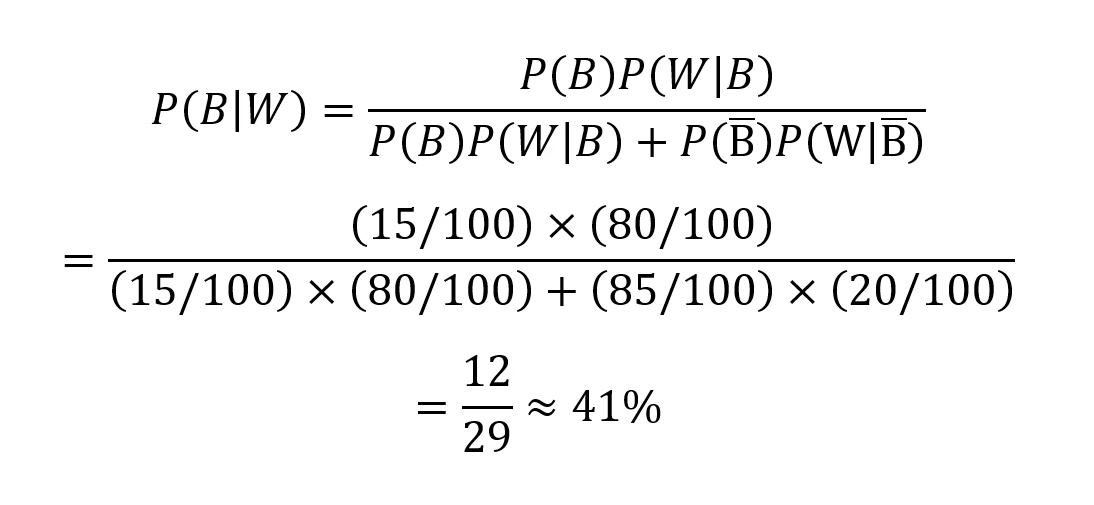

Now we arrive at the heart of the matter. The witness has definitively stated that the cab involved in the accident was Green. Given this new piece of evidence, what is the probability that the cab actually was Green? This is where Bayes' Theorem becomes indispensable. It allows us to update our initial beliefs (prior probabilities) with new evidence (the witness's testimony) to arrive at a more accurate posterior probability.

Bayes' Theorem is expressed as:

P(A|B) = [P(B|A) * P(A)] / P(B)

Where:

- P(A|B) is the posterior probability: The probability of A happening given that B has occurred (what we want to find: P(Actual Green | Witness says Green)).

- P(B|A) is the likelihood: The probability of B happening given that A has occurred (P(Witness says Green | Actual Green)).

- P(A) is the prior probability: The initial probability of A happening (P(Actual Green)).

- P(B) is the evidence probability: The total probability of B happening (P(Witness says Green)).
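Because the cab can only be Green or Blue, the denominator P(B) can be expanded with the law of total probability, the same two-scenario sum used in the previous section. Written out for this problem, the theorem becomes:

```latex
P(\text{Green} \mid \text{says Green}) =
\frac{P(\text{says Green} \mid \text{Green})\,P(\text{Green})}
     {P(\text{says Green} \mid \text{Green})\,P(\text{Green})
      + P(\text{says Green} \mid \text{Blue})\,P(\text{Blue})}
```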

Let's plug in our values:

- P(Actual Green) = 0.10 (Prior probability of a green cab)

- P(Witness says Green | Actual Green) = 0.80 (Witness correctly identifies a green cab)

Next, we need P(Witness says Green). As in the previous section, two scenarios lead to the witness saying "Green":

- The cab was actually Green, AND the witness correctly identified it as Green.

- The cab was actually Blue, AND the witness incorrectly identified it as Green.

Their probabilities are:

- P(Actual Green AND Witness says Green) = P(Witness says Green | Actual Green) * P(Actual Green) = 0.80 * 0.10 = 0.08

- P(Actual Blue AND Witness says Green) = P(Witness says Green | Actual Blue) * P(Actual Blue) = 0.20 * 0.90 = 0.18

So, P(Witness says Green) = 0.08 + 0.18 = 0.26

Now, apply Bayes' Theorem to find P(Actual Green | Witness says Green):

P(Actual Green | Witness says Green) = [P(Witness says Green | Actual Green) * P(Actual Green)] / P(Witness says Green)

= (0.80 * 0.10) / 0.26

= 0.08 / 0.26

≈ 0.3077

This means that even though the witness claimed it was a Green cab, there is only approximately a 30.77% chance that it actually was a Green cab. This result often surprises people, as our intuition might lead us to believe the probability should be much higher given an 80% accurate witness.
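The calculation above can be reproduced in a few lines of Python. This sketch uses the standard-library `fractions` module so the arithmetic stays exact; the variable names are illustrative, not part of any established API.

```python
from fractions import Fraction

# Inputs from the scenario, as exact fractions to avoid rounding noise.
p_green = Fraction(1, 10)          # prior P(Actual Green)
p_blue = 1 - p_green               # prior P(Actual Blue)
p_say_g_given_g = Fraction(8, 10)  # witness correct on a Green cab
p_say_g_given_b = Fraction(2, 10)  # witness wrong on a Blue cab

# Evidence: total probability that the witness says "Green".
p_say_g = p_say_g_given_g * p_green + p_say_g_given_b * p_blue

# Bayes' Theorem: P(Actual Green | Witness says Green).
posterior = p_say_g_given_g * p_green / p_say_g

print(p_say_g)                     # 13/50
print(posterior)                   # 4/13
print(round(float(posterior), 4))  # 0.3077
```

Working in fractions shows that the posterior is exactly 8/26 = 4/13, which rounds to the 30.77% quoted above.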

| Component | Value | Explanation |
|---|---|---|
| P(Actual Green) | 0.10 | Prior probability of a Green cab. |
| P(Witness says Green \| Actual Green) | 0.80 | Likelihood of the witness saying Green if the cab was actually Green (correct ID). |
| P(Witness says Green \| Actual Blue) | 0.20 | Likelihood of the witness saying Green if the cab was actually Blue (incorrect ID). |
| P(Witness says Green) | 0.26 | Total probability of the witness saying Green (0.08 + 0.18). |
| P(Actual Green \| Witness says Green) | ≈ 0.3077 | Posterior probability, calculated using Bayes' Theorem. |

The Power of Bayes' Theorem: Beyond Intuition

The result of our calculation is a stark reminder of the power of Bayes' Theorem and of how it corrects a common cognitive bias, the base rate fallacy. The base rate fallacy occurs when we neglect the prior probability (or base rate) of an event in favour of new, specific information.

In this taxi scenario, our intuition tends to focus heavily on the witness's 80% accuracy rate. "If they're 80% accurate, and they said Green, then it must be highly likely it was Green!" This line of thinking ignores the crucial fact that Green cabs are comparatively rare (10%) next to Blue cabs (90%).

Even though the witness is mostly accurate, the sheer number of Blue cabs means that most of the witness's "Green" reports come from Blue cabs mistaken for Green rather than from genuine Green cabs. Let's look at the numbers again:

- Out of 100 actual Green cabs, the witness would correctly identify 80 as Green.

- Out of 100 actual Blue cabs, the witness would incorrectly identify 20 as Green.

Now apply these rates to the actual mix of cabs. Picture a town with 100 cabs, 10 Green and 90 Blue, each seen by the witness under the same conditions:

- Of the 10 Green cabs, the witness would correctly identify 0.80 * 10 = 8 as Green.

- Of the 90 Blue cabs, the witness would incorrectly identify 0.20 * 90 = 18 as Green.

So, out of all the times the witness says "Green" (8 + 18 = 26 times), only 8 of those instances actually involved a Green cab. This is 8/26, which is approximately 0.3077, exactly what Bayes' Theorem tells us.
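The same counting argument can also be checked by brute force. The sketch below is a Monte Carlo simulation, offered as an illustration rather than as part of the original analysis. It assumes that each cab is equally likely to be involved in an accident (so the colour follows the 10%/90% split) and that the witness is right 80% of the time regardless of colour. The function name `simulate` is hypothetical.

```python
import random


def simulate(trials: int, seed: int = 0) -> float:
    """Estimate P(Actual Green | witness says Green) by random simulation.

    Assumptions: the crashing cab's colour follows the 10%/90% fleet split,
    and the witness names the colour correctly 80% of the time either way.
    """
    rng = random.Random(seed)  # seeded so a run is reproducible
    says_green = 0             # accidents where the witness reports Green
    green_and_says_green = 0   # ...of which the cab really was Green
    for _ in range(trials):
        actual = "Green" if rng.random() < 0.10 else "Blue"
        correct = rng.random() < 0.80
        if correct:
            report = actual
        else:
            report = "Blue" if actual == "Green" else "Green"
        if report == "Green":
            says_green += 1
            if actual == "Green":
                green_and_says_green += 1
    return green_and_says_green / says_green


print(f"{simulate(200_000):.4f}")
```

With a few hundred thousand trials, the estimate should land close to 8/26 ≈ 0.3077. Small differences from run to run (or seed to seed) are ordinary sampling noise.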

This demonstrates that the prior probability (the rarity of Green cabs) plays a profound role in shaping the posterior probability, even when we have seemingly strong evidence. The witness's testimony, while important, is filtered through the lens of the underlying distribution of cabs.

Implications for Real-World Scenarios

The taxi accident problem is a classic illustration of Bayes' Theorem, but its applications extend far beyond hypothetical car crashes. This probabilistic framework is fundamental in numerous real-world fields:

- Medical Diagnostics: When a diagnostic test for a rare disease comes back positive, Bayes' Theorem helps determine the actual probability of having the disease, considering the disease's prevalence (prior probability) and the test's accuracy (likelihood). Often, a positive result for a rare disease still means it's more likely to be a false positive than a true positive, due to the low base rate of the disease.

- Legal Proceedings: Assessing the reliability of eyewitness testimony or forensic evidence. Just as with our taxi witness, even a highly accurate witness might lead to misleading conclusions if the event they're testifying about is inherently rare or if there's a strong base rate against it.

- Spam Filtering: Email providers use Bayesian filters to identify spam. They calculate the probability that an email is spam given the presence of certain words, based on the prior probability of an email being spam and the likelihood of those words appearing in spam vs. legitimate emails.

- Machine Learning and AI: Many algorithms, particularly in natural language processing and image recognition, are built upon Bayesian principles to update probabilities as new data is encountered.

Understanding these principles helps us make more rational decisions, moving beyond gut feelings and intuitive leaps to a more evidence-based approach.

Frequently Asked Questions (FAQs)

Here are some common questions that arise when discussing this type of probability problem:

What exactly is Bayes' Theorem?

Bayes' Theorem is a mathematical formula that describes how to update the probability for a hypothesis as more evidence or information becomes available. It's particularly useful when you have an initial belief (the prior probability) and you want to adjust that belief based on new observations. It helps us calculate the "posterior probability" – the updated probability after considering the evidence.

Why is the probability that it was a Green cab so low (around 30.77%) even if the witness said Green and is 80% accurate?

This is the core insight provided by Bayes' Theorem and illustrates the base rate fallacy. The reason the probability remains relatively low is due to the overwhelming "prior probability" or "base rate" of Blue cabs. Even with an 80% accurate witness, the 90% prevalence of Blue cabs means there's a significant chance that the witness misidentified a Blue cab as Green. Specifically, the chance of an incorrect identification from a Blue cab (0.20 * 0.90 = 0.18) is actually higher than the chance of a correct identification from a Green cab (0.80 * 0.10 = 0.08) because there are so many more Blue cabs to potentially misidentify.
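The same comparison can be made with the odds form of Bayes' Theorem, a standard rearrangement in which P(Witness says Green) cancels out: the posterior odds equal the likelihood ratio multiplied by the prior odds. The testimony multiplies the odds in favour of Green by 4, but those odds start at only 1 to 9:

```latex
\frac{P(\text{Green} \mid \text{says Green})}{P(\text{Blue} \mid \text{says Green})}
= \frac{P(\text{says Green} \mid \text{Green})}{P(\text{says Green} \mid \text{Blue})}
  \times \frac{P(\text{Green})}{P(\text{Blue})}
= \frac{0.80}{0.20} \times \frac{0.10}{0.90}
= 4 \times \frac{1}{9}
= \frac{4}{9}
```

Odds of 4 to 9 correspond to a probability of 4 / (4 + 9) = 4/13 ≈ 0.3077.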

Does this mean eyewitnesses are unreliable?

Not necessarily "unreliable" in all contexts, but it highlights that eyewitness testimony should always be considered in conjunction with all other available information, especially prior probabilities. A witness's accuracy rate alone doesn't tell the whole story. In situations where the event being witnessed is rare, or when there are many other possibilities, the reliability of the testimony needs to be carefully evaluated using tools like Bayes' Theorem.

How do prior probabilities affect the outcome?

Prior probabilities are crucial. They represent our initial knowledge or belief about the likelihood of an event before any new evidence is introduced. If the prior probability of an event is very low (like the Green cab in our scenario), even strong evidence might not raise its posterior probability as much as we intuitively expect, because the "baseline" likelihood is so small. Conversely, if the prior probability is very high, it takes very strong contradictory evidence to significantly lower it.
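To see how strongly the prior drives the result, the Python sketch below keeps the witness at 80% accuracy and varies only the share of Green cabs. The function `posterior_green` is a hypothetical helper for illustration. Apart from the 10% of the original scenario, the fleet shares are made-up values chosen for comparison.

```python
def posterior_green(prior_green: float, accuracy: float = 0.80) -> float:
    """P(Actual Green | witness says Green) for a given share of Green cabs."""
    true_green = accuracy * prior_green               # Green cab, reported Green
    false_green = (1 - accuracy) * (1 - prior_green)  # Blue cab, reported Green
    return true_green / (true_green + false_green)


# Same 80% witness, different (hypothetical) fleet mixes.
for prior in (0.01, 0.10, 0.20, 0.50, 0.90):
    print(f"P(Green) = {prior:.2f} -> P(Green | says Green) = {posterior_green(prior):.4f}")
```

At a 10% share this reproduces 0.3077. With a 20% share the posterior is 0.5, a coin flip. Only when the two companies are equally common does the posterior match the witness's 80% accuracy.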

Could this scenario apply to something like a medical test result?

Absolutely. It's a classic example. Imagine a rare disease that affects 1 in 1,000 people, and a test for it that is 99% accurate (meaning 1% false positives and 1% false negatives). If you test positive, what's the probability you actually have the disease? Many people would guess close to 99%, but Bayes' Theorem gives only about 9%. Out of every 1,000 people tested, roughly 1 genuine case tests positive, while about 10 of the 999 healthy people also test positive. Because the disease is so rare (low prior probability), most positive results are false positives.
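With the numbers from this example, the calculation takes only a few lines. This Python sketch reads "99% accurate" as described above: 99% of sick people test positive (sensitivity), and 99% of healthy people test negative (specificity). The function name is hypothetical.

```python
def p_disease_given_positive(prevalence: float, sensitivity: float, specificity: float) -> float:
    """Bayes' Theorem for a positive test result."""
    true_pos = sensitivity * prevalence               # sick and tests positive
    false_pos = (1 - specificity) * (1 - prevalence)  # healthy but tests positive
    return true_pos / (true_pos + false_pos)


p = p_disease_given_positive(prevalence=1 / 1000, sensitivity=0.99, specificity=0.99)
print(f"{p:.4f}")  # 0.0902
```

The disease's low prevalence plays the same role here as the 10% share of Green cabs in the taxi problem.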

Conclusion

The mystery of the dark night taxi accident serves as a compelling illustration of how our intuitive understanding of probability can sometimes lead us astray. By systematically applying Bayes' Theorem, we move beyond simple assumptions and incorporate all available information: the prevalence of each taxi company's cabs and the known accuracy of the witness. The result is a more nuanced and accurate assessment of the situation. The witness's claim of a Green cab might seem convincing at first glance, but the underlying statistics show that the odds still favour a Blue cab by more than two to one (roughly 69% against 31%). This powerful analytical tool teaches us the critical importance of weighing both prior probability and likelihood when evaluating evidence, so that our conclusions rest on a full picture of all the factors at play.
